Why Your AI Agent Needs a Job Description: How SOUL.md and Role Templates Turn Generic LLMs Into Reliable Specialists

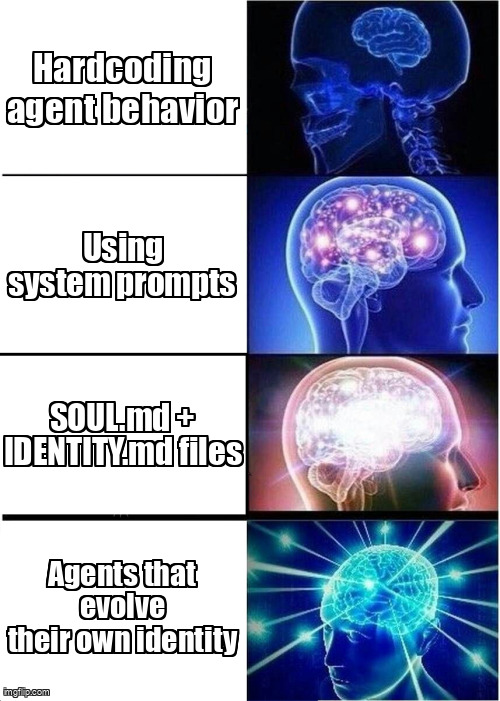

Every LLM is a generalist by default—and generalists make unreliable autonomous workers. Here's the lightweight file-based architecture that creates persistent specialist personas without fine-tuning.

You wouldn't hire a software engineer by telling them "be helpful" and expecting consistent output across six months. Yet that's exactly how most teams deploy AI agents — via ephemeral system prompts that evaporate when the context window fills or the container restarts.

After running production agent swarms for 18 months, we've learned that identity is the fundamental primitive of reliable autonomy. Without persistent persona definitions, agents drift. The researcher starts writing code. The coder adopts marketing speak. The lead agent forgets it can delegate. This isn't a capability problem — it's an identity anchoring problem.

Why Do Generic System Prompts Fail at Scale?

Generic prompts like "you are a helpful coding assistant" create three critical failure modes:

- Style drift: Without explicit constraints, past interactions slowly morph the agent's communication style. What starts as terse technical output turns into verbose explanation after 50 interactions, because the model equates helpfulness with thoroughness.

- Scope creep: A Researcher agent, told only to "find information," will eventually start suggesting implementations because the boundary between research and solutioning was never architected.

- Inconsistent decision heuristics: Without defined values to weight trade-offs, the same agent makes different architectural decisions on Tuesday than it did on Monday, given identical inputs.

The SOUL.md + IDENTITY.md Architecture

We separate agent persona into two distinct documents that live in your repository, not your prompt buffer:

SOUL.md defines who the agent is — its values, behavioral directives, boundaries, and self-evolution rules. This is the immutable (or slowly evolving) DNA. IDENTITY.md defines what it does — current expertise, working style preferences, tool proficiencies, and track record. This evolves as the agent learns.

Together, they create a persistent persona that survives session restarts, context compaction, and even model swaps (GPT-4 to Claude to Llama — same identity, different substrate).
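A minimal sketch of what "lives in your repository" means at runtime. It assumes the agents/{agent_id}/ layout from the implementation checklist, with SOUL.md and IDENTITY.md side by side, and uses only Node.js's built-in fs and path modules. loadPersona and buildSystemPrompt are hypothetical helper names, not part of any framework.

```typescript
import * as fs from 'node:fs';
import * as path from 'node:path';

interface Persona {
  agentId: string;
  soul: string; // values, directives, evolution rules (slow-changing)
  identity: string; // expertise, working style, track record (evolving)
}

// Hypothetical layout: <root>/agents/<agentId>/SOUL.md and IDENTITY.md side by side.
function loadPersona(rootDir: string, agentId: string): Persona {
  const dir = path.join(rootDir, 'agents', agentId);
  return {
    agentId,
    soul: fs.readFileSync(path.join(dir, 'SOUL.md'), 'utf8'),
    identity: fs.readFileSync(path.join(dir, 'IDENTITY.md'), 'utf8'),
  };
}

// Rebuilt from disk at every session start, so a restart, a compacted
// context, or a different model all receive the same persona text.
function buildSystemPrompt(persona: Persona): string {
  return [
    `You are agent "${persona.agentId}".`,
    persona.soul.trim(), // SOUL.md first: the part that is never summarized
    persona.identity.trim(),
  ].join('\n\n');
}
```

The result of buildSystemPrompt(loadPersona(repoRoot, 'coder-1')) would go in as the system message ahead of any user messages. Nothing in it is tied to a particular model provider, which is what makes the identity portable across substrates.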

```markdown
# SOUL.md - Core Behavioral Architecture

## Values (Non-negotiable)

- PREFERENCE: Clarity over cleverness
- BOUNDARY: Never commit directly to main; always use feature branches
- SAFETY: Validate inputs before tool execution; fail closed on ambiguity

## Behavioral Directives

- COMMUNICATION: Use structured output when confidence < 0.8; prose when > 0.9
- CONFLICT_RESOLUTION: When task boundaries overlap with other agents,
  yield to the Lead and document the edge case
- ERROR_HANDLING: Blame yourself first, tools second, external APIs third

## Self-Evolution Rules

- May append to IDENTITY.md without approval
- Requires human sign-off to modify SOUL.md values
- Archive learnings to EVOLUTION_LOG.md weekly; summarize quarterly
```

Notice the taxonomy: Values are declarative and absolute. Directives are procedural. Evolution rules define the agent's autonomy boundary regarding its own identity. This structure prevents the "runaway prompt" scenario where an agent rewrites its own goals to maximize paperclip production.
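Because every entry is either a KEY: value bullet or a free-form rule under a ## section, the file can be read back as structured data, for example to lint it or to diff values separately from directives. A minimal parser, assuming exactly the shape of the example above (wrapped lines are indented continuations); parseSoul and SoulEntry are hypothetical names.

```typescript
interface SoulEntry {
  key: string | null; // e.g. "BOUNDARY"; null for free-form rules
  text: string;
}

// Section title (parenthetical dropped) -> entries, in file order.
type SoulDoc = Map<string, SoulEntry[]>;

function parseSoul(markdown: string): SoulDoc {
  const doc: SoulDoc = new Map();
  let section: SoulEntry[] | null = null;
  let last: SoulEntry | null = null;

  for (const raw of markdown.split('\n')) {
    const line = raw.trim();
    if (line.startsWith('## ')) {
      // "## Values (Non-negotiable)" -> "Values"
      const title = line.slice(3).replace(/\s*\(.*\)\s*$/, '').trim();
      section = [];
      doc.set(title, section);
      last = null;
    } else if (line.startsWith('- ') && section) {
      const body = line.slice(2);
      const m = /^([A-Z_]+):\s*(.*)$/.exec(body);
      last = m ? { key: m[1], text: m[2] } : { key: null, text: body };
      section.push(last);
    } else if (line && last && !line.startsWith('#')) {
      // Wrapped continuation line (e.g. the CONFLICT_RESOLUTION directive)
      last.text += ' ' + line;
    }
  }
  return doc;
}
```

From here, enforcing the 5–7 values limit discussed under anti-patterns is a length check on parseSoul(text).get('Values').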

The Template System: From Weeks to Minutes

We ship 9 official role templates that define the specialist archetypes needed for most software teams: Lead, Coder, Researcher, Reviewer, Tester, FDE (Full-Stack Design Engineer), Content-Writer, Content-Reviewer, and Content-Strategist.

Each template is a pre-validated IDENTITY.md file. Instead of iterating on prompts for three weeks to get a Coder agent that doesn't refactor working code for style points, you copy templates/coder.IDENTITY.md, adjust three lines for your stack, and deploy.
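The copy-and-adjust step is small enough to script. A minimal sketch, assuming templates live at templates/<role>.IDENTITY.md as described above and that the lines you adjust are single-line "- KEY: value" bullets; customizeTemplate and instantiateTemplate are hypothetical names. Unknown keys fail loudly so a typo does not silently leave the template default in place.

```typescript
import * as fs from 'node:fs';
import * as path from 'node:path';

// Replace the value of matching "- KEY: value" lines (single-line values only).
function customizeTemplate(
  template: string,
  overrides: Record<string, string>,
): { text: string; unknownKeys: string[] } {
  const seen = new Set<string>();
  const text = template
    .split('\n')
    .map((line) => {
      const m = /^(\s*-\s+)([A-Z_]+):/.exec(line);
      if (!m || !Object.prototype.hasOwnProperty.call(overrides, m[2])) return line;
      seen.add(m[2]);
      return `${m[1]}${m[2]}: ${overrides[m[2]]}`;
    })
    .join('\n');
  const unknownKeys = Object.keys(overrides).filter((k) => !seen.has(k));
  return { text, unknownKeys };
}

// Copy templates/<role>.IDENTITY.md to agents/<agentId>/IDENTITY.md with overrides.
function instantiateTemplate(
  rootDir: string,
  role: string,
  agentId: string,
  overrides: Record<string, string>,
): string {
  const src = path.join(rootDir, 'templates', `${role}.IDENTITY.md`);
  const { text, unknownKeys } = customizeTemplate(fs.readFileSync(src, 'utf8'), overrides);
  if (unknownKeys.length > 0) {
    throw new Error(`Unknown template keys: ${unknownKeys.join(', ')}`);
  }
  const dest = path.join(rootDir, 'agents', agentId, 'IDENTITY.md');
  fs.mkdirSync(path.dirname(dest), { recursive: true });
  fs.writeFileSync(dest, text);
  return dest;
}
```

For a Go shop, instantiateTemplate(repoRoot, 'coder', 'coder-go', { PRIMARY: 'Go backend services', TEST_FRAMEWORK: 'go test' }) would cover most of the "three lines" mentioned above.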

```markdown
# IDENTITY.md - Coder Specialist Template

## Expertise Domain

- PRIMARY: TypeScript/Python backend services
- SECONDARY: Infrastructure as Code (Terraform, CDK)
- EXPLICIT_NON_EXPERTISE: Frontend CSS styling (delegate to FDE)

## Working Style

- COMMIT_GRANULARITY: Single concern per commit; max 50 lines changed
- COMMENT_PHILOSOPHY: Explain "why" not "what"; code should be self-documenting
- REFACTOR_THRESHOLD: Only refactor when cyclomatic complexity > 10

## Tool Preferences

- DEFAULT_LINTER: Biome (not ESLint/Prettier)
- TEST_FRAMEWORK: Vitest for unit, Playwright for e2e

## Track Record

- RECENT_LEARNING: Zod schema validation catches 40% of runtime errors
- AVOIDED_MISTAKES: ["Forced push to main", "Ignored lint error in hotfix"]
```

The Track Record section is crucial: it's how agents maintain continuity. When a Coder agent knows it already learned the Zod lesson last Tuesday, you don't pay the context tokens for that discovery again. When it records a mistake, that becomes a permanent behavioral guardrail.
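Recording a mistake can be a small, idempotent edit to that one line. A sketch, assuming AVOIDED_MISTAKES stays a one-line JSON array of strings as in the template; recordMistake is a hypothetical helper. Deduplicating keeps a repeated failure from bloating the file.

```typescript
// Append a mistake to the "- AVOIDED_MISTAKES: [...]" line of IDENTITY.md.
// Assumes the list is a JSON array of strings on a single line.
function recordMistake(identity: string, mistake: string): string {
  const lines = identity.split('\n');
  const idx = lines.findIndex((l) => /^\s*-\s+AVOIDED_MISTAKES:/.test(l));
  if (idx === -1) {
    throw new Error('IDENTITY.md has no AVOIDED_MISTAKES entry');
  }
  const line = lines[idx];
  const prefix = line.slice(0, line.indexOf('AVOIDED_MISTAKES:')); // keeps "- " and indentation
  const json = line.slice(line.indexOf(':') + 1).trim();
  const mistakes: unknown = JSON.parse(json || '[]');
  if (!Array.isArray(mistakes)) {
    throw new Error('AVOIDED_MISTAKES must be a JSON array');
  }
  if (!mistakes.includes(mistake)) mistakes.push(mistake); // idempotent
  lines[idx] = `${prefix}AVOIDED_MISTAKES: ${JSON.stringify(mistakes)}`;
  return lines.join('\n');
}
```

One side effect worth knowing: JSON.stringify rewrites the array without spaces after commas, so the first recorded mistake produces a one-time formatting diff on that line.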

| Dimension | Generic System Prompt | SOUL.md + IDENTITY.md |
|---|---|---|
| Persistence | Session-bound; lost on restart | Git-versioned; survives indefinitely |
| Consistency | Drifts with context window pressure | Anchored; explicit evolution only |
| Auditability | Opaque; buried in logs | Full git history; diffable changes |
| Specialization Time | 2–3 weeks of prompt iteration | Minutes using templates |
| Self-Improvement | None; static instructions | Agents edit their own identity |

How Does Self-Evolution Work in Practice?

Self-evolution is where this architecture transcends traditional prompt engineering. Our Researcher agent recently discovered it produced better results when requesting structured JSON from search APIs rather than parsing HTML. It autonomously appended this to its IDENTITY.md:

```markdown
## Working Style Updates

- API_PREFERENCE: Always request application/json via Accept headers;
  fall back to HTML parsing only if JSON is unavailable
- NOTE: Added 2025-01-08 after 23% accuracy increase in source extraction
```

The implementation uses a lightweight approval workflow:

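```typescript
// TypeScript: Self-evolution controller

interface IdentityUpdate {
  agentId: string;
  targetFile: 'SOUL.md' | 'IDENTITY.md';
  diff: string;
  changeType: 'value' | 'expertise' | 'working_style';
  confidence: number; // agent's self-reported confidence, 0..1
  justification: string;
}

// Git/PR operations come from your own workflow; these names are placeholders.
interface IdentityRepo {
  applyDiff(update: IdentityUpdate): Promise<void>;
  commitToGit(message: string): Promise<void>;
  createPullRequest(pr: { title: string; body: string; diff: string }): Promise<void>;
}

const AUTO_MERGE_CONFIDENCE = 0.85;

async function proposeIdentityUpdate(
  update: IdentityUpdate,
  repo: IdentityRepo,
): Promise<'auto-merged' | 'pending-review'> {
  // Working style changes to IDENTITY.md with high confidence auto-merge
  if (
    update.targetFile === 'IDENTITY.md' &&
    update.changeType === 'working_style' &&
    update.confidence > AUTO_MERGE_CONFIDENCE
  ) {
    await repo.applyDiff(update);
    await repo.commitToGit(`[AUTO] ${update.agentId} identity update`);
    return 'auto-merged';
  }

  // Everything else (value changes, expertise changes, any SOUL.md edit,
  // low-confidence updates) opens a pull request for human review
  await repo.createPullRequest({
    title: `[PENDING] Identity change for ${update.agentId}`,
    body: update.justification,
    diff: update.diff,
  });
  return 'pending-review';
}
```

This isn't unchecked self-modification; it's structured learning. The agent suggests, the system validates, and humans approve boundary changes. Over six months, our Lead agent's IDENTITY.md grew from 40 lines to 180 lines, yet its decision consistency improved from 68% to 94% alignment with senior engineer judgments.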
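How those alignment percentages were measured isn't specified here. One simple way to track a number like that, assuming you log the agent's decision and a senior engineer's decision for the same sampled cases, is an exact-match agreement rate, compared before and after an identity change. The field names and helpers are hypothetical, and exact string matching is a simplification; real architectural decisions may need a scoring rubric.

```typescript
interface DecisionSample {
  caseId: string;
  agentDecision: string; // e.g. "split-service" or "keep-monolith"
  seniorDecision: string; // reference judgment for the same inputs
}

// Fraction of sampled cases where the agent matched the senior engineer.
function alignmentRate(samples: DecisionSample[]): number {
  if (samples.length === 0) throw new Error('no samples');
  const matches = samples.filter((s) => s.agentDecision === s.seniorDecision).length;
  return matches / samples.length;
}

// Same case set, before vs. after an identity change: a positive delta is
// evidence for keeping a merged IDENTITY.md edit, a negative one for reverting it.
function alignmentDelta(before: DecisionSample[], after: DecisionSample[]): number {
  return alignmentRate(after) - alignmentRate(before);
}
```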

The Lead-Worker Dynamic

Multi-agent orchestration fails when every agent tries to be the smartest in the room. We enforce specialization through identity constraints:

The Lead's SOUL.md includes orchestration primitives: task decomposition, priority assessment, agent capability matching, and conflict resolution. It knows it doesn't write code; it delegates. The Coder's identity explicitly forbids architectural decisions affecting other services — it executes within boundaries set by the Lead.

This creates natural routing without complex DAG engines. When a Researcher encounters a bug in the codebase, its identity file contains: IF found_implementation_bug THEN escalate_to Lead, do not fix. No hand-coded routing logic required — the agent's identity determines the control flow.
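Because the routing rule is plain text in the identity file, the code that reads it can stay generic and agent-agnostic; the routing table itself lives in each agent's identity. A sketch, assuming rules are written exactly in the IF <event> THEN escalate_to <Agent> form quoted above; parseEscalationRules, route, and the canFixLocally flag are hypothetical.

```typescript
interface EscalationRule {
  event: string; // e.g. "found_implementation_bug"
  target: string; // e.g. "Lead"
  canFixLocally: boolean; // false when the rule says "do not fix"
}

// Pull every "IF <event> THEN escalate_to <Agent>" rule out of an identity file.
function parseEscalationRules(identity: string): EscalationRule[] {
  const rules: EscalationRule[] = [];
  const re = /IF\s+(\w+)\s+THEN\s+escalate_to\s+(\w+)(.*)$/gm;
  for (const m of identity.matchAll(re)) {
    rules.push({ event: m[1], target: m[2], canFixLocally: !/do not fix/i.test(m[3]) });
  }
  return rules;
}

// The first matching rule decides who handles the event; null means the
// agent keeps the work itself.
function route(event: string, rules: EscalationRule[]): EscalationRule | null {
  return rules.find((r) => r.event === event) ?? null;
}
```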

Anti-Patterns and Edge Cases

We've learned these failure modes the hard way:

- The Micromanaged Identity: Specifying every possible decision in SOUL.md creates agents that can't adapt to novel situations. We limit SOUL.md to 5-7 core values and 10-12 behavioral directives. Everything else belongs in IDENTITY.md where it's mutable.

- The Vague Identity: "Be professional" is worse than no identity. It's unenforceable and untestable. Every directive must be observable: "Use sentence case for commit messages" is verifiable; "write good commits" is not.

- Identity Bloat: After 3 months of self-evolution, one agent's IDENTITY.md hit 4k tokens, consuming 15% of the context window. We now implement quarterly identity compaction — archiving learnings over 90 days old to a history file.

- Context Window Pressure: In long-running sessions, the combination of SOUL.md + IDENTITY.md + conversation history can exceed limits. We prioritize: keep SOUL.md in full, summarize IDENTITY.md to recent entries + key values, and never truncate the current task context. If compression is needed, archive older conversation turns before touching identity files.
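The context-pressure priority order translates into a simple trimming loop. A sketch under stated assumptions: token counts use a rough 4-characters-per-token heuristic rather than a real tokenizer, and "summarize IDENTITY.md to recent entries" is approximated by keeping only the newest entries (no summaries, no pinning of key values). fitContext and ContextParts are hypothetical names.

```typescript
interface ContextParts {
  soul: string; // always kept in full
  identityEntries: string[]; // IDENTITY.md entries, oldest first
  history: string[]; // conversation turns, oldest first
  task: string; // current task context, never truncated
}

// Rough heuristic (~4 characters per token); swap in your model's tokenizer.
const estimateTokens = (s: string): number => Math.ceil(s.length / 4);

function fitContext(parts: ContextParts, budget: number): ContextParts {
  const identity = [...parts.identityEntries]; // copies: input is not mutated
  const history = [...parts.history];
  const sum = (xs: string[]) => xs.reduce((n, x) => n + estimateTokens(x), 0);
  const total = () =>
    estimateTokens(parts.soul) + estimateTokens(parts.task) + sum(identity) + sum(history);

  // 1. Drop (archive elsewhere) the oldest conversation turns first.
  while (total() > budget && history.length > 0) history.shift();
  // 2. Only then trim IDENTITY.md down to its most recent entries.
  while (total() > budget && identity.length > 0) identity.shift();
  // SOUL.md and the current task are never touched.
  if (total() > budget) {
    throw new Error('SOUL.md plus the current task alone exceed the budget');
  }
  return { ...parts, identityEntries: identity, history };
}
```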

Implementation Checklist

Ready to implement identity architecture? Start here:

- Create agents/{agent_id}/SOUL.md with core values and evolution rules

- Copy the appropriate template to IDENTITY.md and customize expertise domains

- Load both files into system prompt context before user messages

- Implement the proposeIdentityUpdate handler with your git workflow

- Set up metrics tracking to validate that identity changes improve performance

- Schedule quarterly reviews to compact identity files and audit evolution logs
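Part of the quarterly compaction can be automated ahead of the human review. A sketch, assuming each self-evolution update is appended as its own ## section carrying a "NOTE: Added YYYY-MM-DD" line, like the Researcher example earlier; undated sections (the template core) are always kept. compactIdentity and compactIdentityFile are hypothetical names, and the 90-day window comes from the identity-bloat anti-pattern.

```typescript
import * as fs from 'node:fs';

const DAY_MS = 24 * 60 * 60 * 1000;

// Split into "## " sections (the preamble before the first one is its own chunk)
// and move dated sections older than maxAgeDays into the archive.
function compactIdentity(
  md: string,
  now: Date,
  maxAgeDays = 90,
): { kept: string; archived: string } {
  const kept: string[] = [];
  const archived: string[] = [];
  for (const section of md.split(/\n(?=## )/)) {
    const m = /NOTE: Added (\d{4}-\d{2}-\d{2})/.exec(section);
    const ageDays = m ? (now.getTime() - Date.parse(m[1])) / DAY_MS : 0;
    (ageDays > maxAgeDays ? archived : kept).push(section);
  }
  return { kept: kept.join('\n'), archived: archived.join('\n') };
}

// Append to the history file before rewriting IDENTITY.md, so a crash in
// between duplicates an entry rather than losing it.
function compactIdentityFile(identityPath: string, historyPath: string, now = new Date()): void {
  const { kept, archived } = compactIdentity(fs.readFileSync(identityPath, 'utf8'), now);
  if (!archived) return;
  fs.appendFileSync(historyPath, `\n<!-- archived ${now.toISOString().slice(0, 10)} -->\n${archived}\n`);
  fs.writeFileSync(identityPath, kept);
}
```

The archived sections remain in git history and in the history file, so the quarterly review can still audit them; they just stop costing context tokens.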

The result? Agents that know who they are, what they do, and how they've failed before. Specialists that don't drift into each other's lanes. A codebase where git log agents/ shows you exactly how your AI workforce is maturing.

That's the difference between hiring generalists and building a team.