Stop Fighting Context Window Limits — Design for Compaction Instead

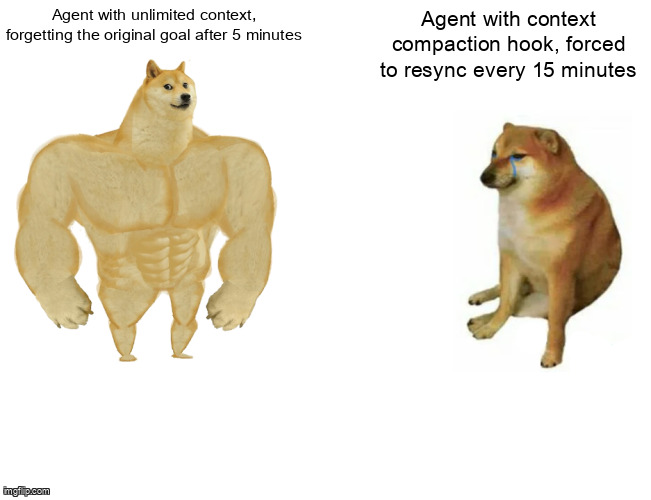

Our agents started performing better after we stopped trying to avoid compaction and started treating it as a feature.

Six months ago, our agents were drowning. Not in errors—in context. We were running web automation tasks that could last 45 minutes, execute 3,000+ tool calls, and accumulate conversation histories that would make a novelist weep. The obvious answer was bigger context windows. So we paid for 200K tokens. Then our completion rates dropped.

This is the story of why that happened, and how we built a system that treats context compaction as a first-class primitive instead of a failure mode to avoid.

The Counterintuitive Discovery

Here’s the pattern that broke our assumptions. We had an agent tasked with competitive analysis: scrape pricing from 150 competitor websites, normalize the data, and generate a comparison report. With our previous architecture, we tried desperately to keep the full conversation history intact. Summaries, hierarchical memory, selective eviction—we tried it all. The agent would still drift. Around minute 30, it would start asking clarifying questions we’d answered in minute 2. By minute 40, it would regenerate the full progress report from scratch, unaware we’d already built one.

The fix came from a place of resignation. We couldn’t prevent compaction, so we decided to make it happen well. We built a PreCompact hook that fires immediately before the context window truncates. Here’s what it actually does:

// From src/core/hooks/lifecycle.ts

export interface CompactionContext {

taskId: string;

originalGoal: string;

currentProgress: ProgressSnapshot;

toolCallCount: number;

compactCount: number; // How many times we've compacted

}

export async function executePreCompact(

agent: AgentInstance,

ctx: CompactionContext

): Promise<InjectedMessage[]> {

// Awaited to completion before truncation begins

const reminder = await buildGoalReminder(ctx);

return [

{

role: 'system',

content: `=== CONTEXT SYNCHRONIZATION ===

Original task (${ctx.compactCount + 1} compaction cycles elapsed):

${ctx.originalGoal}

Current progress (verified from persistent store):

- Completed: ${ctx.currentProgress.completedSteps.join(', ')}

- Current focus: ${ctx.currentProgress.currentStep}

- Remaining: ${ctx.currentProgress.remainingSteps.join(', ')}

- Data collected: ${ctx.currentProgress.keyFindings.length} items

=== END SYNCHRONIZATION ===`,

metadata: { type: 'precompact_sync', compactIndex: ctx.compactCount }

}

];

}

// The injection happens atomically with compaction

export class ContextManager {

async compactIfNeeded(agentId: string): Promise<CompactionResult> {

const currentTokens = await this.getTokenCount(agentId);

const threshold = this.config.compactionThreshold; // typically 85% of max

if (currentTokens < threshold) return { compacted: false };

// CRITICAL: Fetch state BEFORE truncation

const taskState = await this.fetchCurrentTaskState(agentId);

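// executePreCompact expects an AgentInstance, not an id; getAgent is an assumed lookup helper, not shown here

const agent = await this.getAgent(agentId);

const preCompactMessages = await executePreCompact(agent, taskState);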

// Inject, then truncate everything before our sync message

await this.injectMessages(agentId, preCompactMessages);

const newHistory = await this.truncatePreservingTail(

agentId, preCompactMessages.length

);

return {

compacted: true,

preCompactTokens: currentTokens,

postCompactTokens: await this.getTokenCount(agentId),

syncMessageCount: preCompactMessages.length

};

}

}

The results were immediate and confusing. Tasks that previously failed at 67% completion (our old metric) suddenly started finishing. But here’s what was strange: the agents weren’t just surviving compaction. They were snapping back to coherence, like someone shaking you awake when you’ve been staring blankly at a screen for too long.

We realized we’d accidentally built a synchronization checkpoint. Every compaction event became a forced re-read of the original goal and current progress. The agent couldn’t drift for more than ~15 minutes (our compaction interval at current token rates) without getting yanked back to first principles.

Why Bigger Context Windows Made Everything Worse

“Context fatigue is real. With 200K tokens of accumulated conversation, our agents started behaving like someone trying to work while carrying a backpack full of every conversation they’d ever had.”

When we upgraded to 200K token windows, we expected linear improvement. Instead we got three pathologies that only manifested at scale:

- Stale reference poisoning: The agent would cite tool outputs from call #47 in the context of call #2,400. The information was technically in the window, but it was 43 minutes and 2,353 tool calls stale. The agent couldn’t distinguish recent from ancient.

- Work repetition: Without compaction forcing a progress review, agents would re-derive solutions they’d already found. We saw pricing analysis agents regenerate comparison matrices three times in a single session, each time convinced they were doing it for the first time.

- Objective dissolution: The original goal—literally the first system message—would get buried under 800 lines of tool output. The agent would start improvising sub-tasks that felt related but didn’t serve the actual objective.

The paradox: giving the agent more memory made it forget what mattered. Compaction, by brutally truncating everything except our injected synchronization message, forced the agent to carry only what was essential.

What Doesn’t Work: Summary-Based Memory

Before arriving at the PreCompact hook, we tried the obvious approach: incremental summarization. Every N tokens, summarize the conversation so far and replace the raw history with the summary. It sounds elegant. It was disastrous.

The problem is lossiness without intent. A summary of 10,000 tokens of tool output necessarily discards information. But which information? The summarizer (usually the same LLM) doesn’t know what will be relevant downstream. We saw agents lose track of specific error codes because the summary deemed them “implementation details.” We saw agents repeat failed approaches because the summary said “attempted X” but not “attempted X and it failed with error Y.”

Summary-based memory is compression without a format. The PreCompact approach is different: it’s lossy by design, but the structure of what survives is guaranteed. Original goal: preserved. Current progress: preserved. Key findings: preserved. Everything else? If it’s important, it should be in external state, not floating in context history.
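One way to picture "lossy by design, with guaranteed structure" is a typed record for attempts, so that a failure and its error code cannot be dropped as an "implementation detail". The sketch below is illustrative only: `AttemptRecord`, `renderAttempts`, and the example approaches and error code are hypothetical, not part of the system described here.

```typescript
// Hypothetical shape: a failed attempt cannot be recorded without its error code.
type AttemptRecord =
  | { approach: string; outcome: 'succeeded' }
  | { approach: string; outcome: 'failed'; errorCode: string };

// Render attempts as lines for a sync message; failures always carry their error.
function renderAttempts(attempts: AttemptRecord[]): string[] {
  return attempts.map((a) =>
    a.outcome === 'failed'
      ? `- ${a.approach}: FAILED (${a.errorCode})`
      : `- ${a.approach}: ok`
  );
}

const lines = renderAttempts([
  { approach: 'fetch pricing via sitemap', outcome: 'failed', errorCode: 'HTTP_403' },
  { approach: 'fetch pricing via rendered page', outcome: 'succeeded' },
]);
console.log(lines.join('\n'));
```

A free-form summary is allowed to say "attempted X"; a record like this is not, because the type refuses a failed attempt without the error that explains it.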

The Compaction-Aware Architecture

Three patterns from our production system that make this reliable:

Pattern 1: Goal Injection Structure

The PreCompact hook fetches from two sources: the task definition (immutable) and the progress store (mutable). This distinction matters. The original goal never changes. Current progress updates frequently through explicit storeProgress tool calls:

// From src/tools/progress.ts

import { z } from 'zod';

export const storeProgress = defineTool({

name: 'storeProgress',

description: 'Atomically persist progress to survive compaction',

parameters: z.object({

completedSteps: z.array(z.string()),

currentStep: z.string(),

remainingSteps: z.array(z.string()),

keyFindings: z.array(z.object({

key: z.string(),

value: z.string(),

source: z.string() // Which tool call produced this

})),

checkpointId: z.string().optional()

}),

handler: async (params, ctx) => {

await ctx.db.taskProgress.upsert({

where: { taskId: ctx.taskId },

update: {

...params,

updatedAt: new Date(),

compactCount: ctx.currentCompactCount

},

create: {

taskId: ctx.taskId,

...params,

compactCount: 0

}

});

return { stored: true, checkpointId: params.checkpointId };

}

});

Pattern 2: Context Snapshots

We track every compaction event in a dedicated table. This isn’t for debugging—it’s for the task scheduler to make routing decisions:

// From prisma/schema.prisma

model TaskContextSnapshot {

id String @id @default(cuid())

taskId String

// Compaction metrics

compactCount Int // How many times this task has compacted

preCompactTokens Int // Tokens before this compaction

postCompactTokens Int // Tokens after (should be ~20% of max)

peakContextPercent Float // Highest % of context used during this cycle

// Recovery data

syncMessageLength Int // Characters in our injected sync

progressCheckpoints Json // Array of checkpointIds from storeProgress

// Timing

compactedAt DateTime @default(now())

@@index([taskId, compactCount])

@@index([peakContextPercent]) // For identifying undertasked agents

}

// Usage: tasks with compactCount=0 and peakContextPercent<30%

// are flagged as “undertasked”: they could do more work per cycle

Pattern 3: Unthrottled Compaction Logging

Regular context readings are throttled (we poll every 5 seconds during busy periods). But compaction events are logged immediately, unthrottled, with full pre/post state. You cannot afford to miss a compaction: an unrecorded one means your external state and context state have desynchronized without anyone noticing.

// From src/core/logging/telemetry.ts

export async function logCompactionEvent(

agentId: string,

result: CompactionResult

): Promise<void> {

// NEVER throttle this. Use synchronous write path.

const event: CompactionTelemetry = {

type: 'compaction',

agentId,

timestamp: Date.now(),

preCompactTokens: result.preCompactTokens,

postCompactTokens: result.postCompactTokens,

tokenReduction: result.preCompactTokens - result.postCompactTokens,

syncMessageCount: result.syncMessageCount // the field compactIfNeeded actually returns

};

// Direct write to telemetry, bypasses normal batching

await telemetry.writeImmediate(event);

// Also update the task-level aggregate

await updateTaskCompactionMetrics(agentId, event);

}

What The Data Actually Shows

We categorize task outcomes by compaction behavior. Here’s what we found running ~12,000 tasks over three weeks:

| Compaction Profile | Task Count | Completion Rate | Avg Duration |
|---|---|---|---|
| Zero compactions | 2,847 | 61% | 8.3 min |
| 1-2 compactions | 4,193 | 84% | 23.7 min |
| 3-4 compactions | 3,956 | 79% | 41.2 min |
| 5+ compactions | 1,004 | 54% | 67.8 min |

Completion = task finished with validatable output. Tasks killed by timeout or circuit breaker are not counted as completed.

The sweet spot is obvious: 1-2 compactions. These are tasks large enough to need meaningful work, but bounded enough to maintain coherence. Zero-compaction tasks often fail because they’re actually too small—agents get undertasked and start inventing scope. Five-plus compaction tasks fail because the complexity exceeds what can be tracked across that many synchronization points.

This data changed how we design tasks. Instead of asking “can this fit in context?” we ask “will this complete within 1-2 compactions, that is, 2-3 context cycles?” If the answer is no, we decompose. The context window becomes a design constraint, not a resource to maximize.
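That sizing question can be turned into a rough estimate before a task is scheduled. The sketch below assumes the figures mentioned in the code comments earlier (compaction at about 85% of the window, about 20% of the window in use right after compaction). `WindowConfig`, `estimateCompactions`, `shouldDecompose`, and the example token counts are hypothetical, and a real estimate would need measured token rates.

```typescript
// Rough sizing sketch; all names and example figures are hypothetical.
interface WindowConfig {
  maxTokens: number;           // model context size
  compactAtFraction: number;   // e.g. 0.85: compaction threshold
  postCompactFraction: number; // e.g. 0.20: window fill right after compaction
}

// Estimate how many compactions a task triggers if it appends
// `expectedTokens` of new context over its lifetime.
function estimateCompactions(expectedTokens: number, cfg: WindowConfig): number {
  const threshold = cfg.maxTokens * cfg.compactAtFraction;
  if (expectedTokens < threshold) return 0; // never reaches the threshold
  // After the first compaction, each cycle has this much room before the next.
  const roomPerCycle = cfg.maxTokens * (cfg.compactAtFraction - cfg.postCompactFraction);
  return 1 + Math.floor((expectedTokens - threshold) / roomPerCycle);
}

// Flag tasks expected to exceed the compaction budget (default: 2).
function shouldDecompose(expectedTokens: number, cfg: WindowConfig, maxCompactions = 2): boolean {
  return estimateCompactions(expectedTokens, cfg) > maxCompactions;
}

const cfg: WindowConfig = { maxTokens: 200000, compactAtFraction: 0.85, postCompactFraction: 0.2 };
console.log(estimateCompactions(400000, cfg), shouldDecompose(400000, cfg));
console.log(estimateCompactions(600000, cfg), shouldDecompose(600000, cfg));
```

The default budget of two compactions mirrors the best-performing row of the table; tasks that come out above it are candidates for splitting into subtasks with their own goals and progress stores.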

How Do I Structure Prompts for Compaction?

Call storeProgress frequently. What it persists lives outside the context window, so it survives compaction. Use structured output schemas so downstream consumers don’t depend on context-window-resident information. Design tasks to be completable in 2-3 compaction cycles.

Specifically: your agent should call storeProgress after every significant state change—not just at the end. We recommend at minimum: after each major tool category (data collection → analysis → synthesis), after any error recovery, and before any operation that might trigger multiple tool calls.

// Anti-pattern: Storing everything at the end

async function badApproach(): Promise<Result> {

const data = await collectData(); // 500 tool calls

const analyzed = await analyze(data); // 200 tool calls

const report = await generate(analyzed); // 100 tool calls

// Only store at the end!

await storeProgress({

completedSteps: ['collect', 'analyze', 'generate'],

...

});

return report;

}

// Pattern: Checkpointing throughout

async function goodApproach(): Promise<Result> {

const data = await collectData();

await storeProgress({

completedSteps: ['collect'],

currentStep: 'analyze',

keyFindings: extractFindings(data)

});

const analyzed = await analyze(data);

await storeProgress({

completedSteps: ['collect', 'analyze'],

currentStep: 'generate',

keyFindings: [...extractFindings(data), ...extractAnalysis(analyzed)]

});

// If compaction happens here, we resume with full knowledge

const report = await generate(analyzed);

await storeProgress({ completedSteps: ['collect', 'analyze', 'generate'] });

return report;

}

The Prediction

Within 18 months, “compaction-first” agent design will be as standard as “mobile-first” web design was in 2015. Not because it’s elegant—because the economics are inexorable.

Infinite context sounds like a solution until you do the math. At current rates, 200K tokens costs roughly $1-2 per request. For an agent running continuously with 4K token output per step, that’s $1-2 every 50 steps. A 3,000-step task (not unusual for our workloads) becomes $60-120 in context costs alone. And that’s assuming you want all that context—which, as we’ve shown, you don’t.
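The arithmetic behind that estimate fits in a few lines. This only restates the figures already given (a 200K window, 4K tokens of output per step, $1-2 per full-window request); the function and parameter names are hypothetical.

```typescript
// Reproduces the back-of-the-envelope estimate; names are illustrative.
function contextCostRange(
  totalSteps: number,
  windowTokens: number,
  tokensPerStep: number,
  costPerWindow: [number, number] // [low, high] in dollars
): [number, number] {
  const stepsPerWindow = windowTokens / tokensPerStep; // 200K / 4K = 50 steps
  const windowsUsed = totalSteps / stepsPerWindow;     // 3,000 / 50 = 60
  return [windowsUsed * costPerWindow[0], windowsUsed * costPerWindow[1]];
}

const [low, high] = contextCostRange(3000, 200000, 4000, [1, 2]);
console.log(`$${low}-${high}`); // $60-120
```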

The alternative: compact aggressively, store in external state (DynamoDB, Postgres, whatever—costs pennies per million operations), and pay for tokens you actually use. With 32K effective context and strategic compaction, the same 3,000-step task costs under $5.

The technical argument is simpler: agents work better when they’re forced to re-synchronize periodically. The financial argument is overwhelming. The only reason we’re not all designing this way is inertia—we’re still thinking of context as memory to preserve rather than a working set to curate.

Start With One Hook

If you’re building long-running agents, don’t wait for your context limit to bite. Implement a PreCompact hook today. It costs nothing if you’re not hitting limits yet, and it transforms a future emergency into a controlled synchronization point.

The code above is real—it’s running in production, handling tasks that run for hours and call thousands of tools. The patterns work because they acknowledge something fundamental: context windows aren’t memory. They’re attention. And attention, by nature, must be focused.

Stop fighting the limit. Design for it.