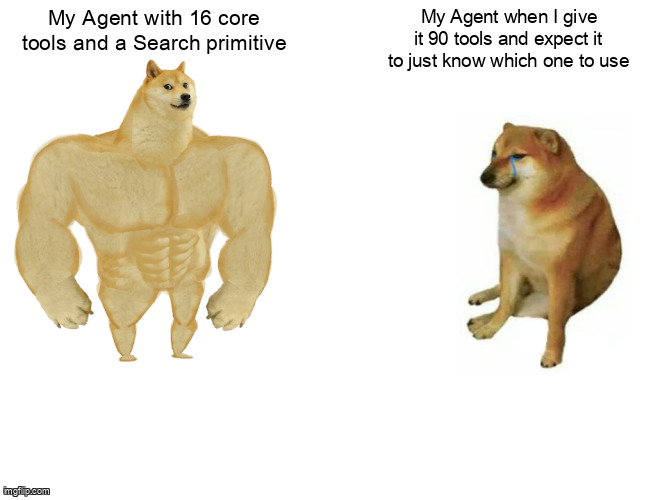

We Hid 74 of Our Agent’s 90 MCP Tools, and It Got Smarter

Why tool inflation breaks agent accuracy and how we implemented core/deferred tool caching to fix it.

Twelve months ago, our agent-swarm MCP server had 12 tools and rock-solid tool selection. Today it has 90 tools, but we expose only 16 of them at session start. Counterintuitively, hiding the other 74 (about 82% of our capabilities) made the agent significantly more reliable, reduced per-session costs, and eliminated the “thrashing” behavior that was silently degrading user experience.

This is the story of tool inflation—the anti-pattern where every additional tool, justifiable in isolation, collectively breaks the agent’s ability to choose correctly. And it’s the story of how we rebuilt our architecture around a concept borrowed from CPU design: caching.

Why Adding Tools Makes Agents Dumber

The failure mode is invisible in unit tests. Each new tool—whether for workflow management, epic creation, or third-party integrations—worked perfectly in isolation. But in production, we observed agents picking create-channel when users asked to “send a message,” calling list-workflows when they needed get-tasks, and burning three to four turns per session re-deriving which tool to use from an overwhelming menu.

The pattern only became visible when we instrumented tool-selection accuracy per session. We discovered that our accuracy had drifted from the low nineties to the low seventies—not because any individual tool was broken, but because the agent faced a paradox of choice. With 90 tools in context, similarly named operations (slack-post versus slack-reply, list-channels versus list-services) created semantic collisions that confused even capable models.
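Per-session accuracy can be computed from a log of tool calls, provided each call is labeled with the tool that should have been chosen. The sketch below is illustrative only: the `ToolCallRecord` shape and the idea of a reviewer-supplied `expectedTool` label are assumptions, not our production schema.

```typescript
// Hypothetical record shape: one entry per tool call in a labeled evaluation run.
interface ToolCallRecord {
  sessionId: string;
  selectedTool: string; // tool the agent actually called
  expectedTool: string; // tool a reviewer labeled as the correct choice
}

// Returns accuracy (0..1) per session: correct selections / total selections.
function accuracyBySession(records: ToolCallRecord[]): Map<string, number> {
  const totals = new Map<string, { correct: number; total: number }>();
  for (const r of records) {
    const entry = totals.get(r.sessionId) ?? { correct: 0, total: 0 };
    entry.total += 1;
    if (r.selectedTool === r.expectedTool) entry.correct += 1;
    totals.set(r.sessionId, entry);
  }
  const result = new Map<string, number>();
  totals.forEach((counts, sessionId) => {
    result.set(sessionId, counts.correct / counts.total);
  });
  return result;
}
```

Tracking the per-session values over time, rather than a single global average, is what makes slow drift visible.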

The 10K Token Cliff Nobody Documents

Here is the mechanic that explains the degradation: Claude Code auto-activates Tool Search when total registered tool schemas exceed approximately 10,000 tokens. Below this threshold, every tool definition lives in the system prompt; the model sees all options and selects correctly. Above it, the harness silently substitutes a search-then-fetch discovery layer that demands the agent know what to query for before it can access the tool.

Most teams do not know which side of this cliff they are on because the failure mode is “agent picks wrong tool” rather than “tool not available.” The system does not throw an error; it just quietly performs worse. Our tool schemas had grown to roughly 14,000 tokens, pushing us deep into the search-mediated regime without our knowledge.

How Do You Choose Which Tools Stay Resident?

We implemented a two-tier architecture inspired by CPU cache hierarchies. Core tools are L1 cache—always resident, small, hot. Deferred tools are L2—large, on-demand, slower to access. The agent pays the cost of a cache miss (one to two turns of discovery) only when actually needed, rather than paying the attention cost of carrying every tool every turn.

Our classification lives in src/tools/tool-config.ts:

```typescript
// src/tools/tool-config.ts

// Always resident: loaded into the system prompt at session start.
export const CORE_TOOLS = [
  'initialize-session',
  'get-context',
  'update-task-status',
  'send-message',
  'search-memory',
  'list-active-agents',
  'get-agent-state',
  'create-task',
  'resolve-blocker',
  'fetch-relevant-docs',
  'log-observation',
  'request-human-handoff',
  'sync-with-tracker',
  'validate-output',
  'ToolSearch', // The discovery primitive itself
  'get-core-metrics',
] as const;

// Discoverable on demand through ToolSearch; not loaded up front.
export const DEFERRED_TOOLS = [
  'create-workflow',
  'trigger-workflow',
  'schedule-cron',
  'delete-cron',
  'create-epic',
  'breakdown-epic',
  'integrate-jira',
  'integrate-linear',
  'manage-mcp-server',
  'configure-prompt-template',
  'deploy-agent',
  'scale-swarm',
  'analyze-logs',
  'generate-report',
  'export-data',
  'import-knowledge-base',
  // ... 58 additional specialized tools
] as const;

export type CoreTool = typeof CORE_TOOLS[number];
export type DeferredTool = typeof DEFERRED_TOOLS[number];
```

The ToolSearch tool is the critical bridge. When an agent needs functionality outside the core set, it queries with semantic tags:

```jsonc
// Tool usage pattern
{
  "tool": "ToolSearch",
  "arguments": {
    "query": "workflow automation trigger",
    "limit": 3
  }
}
// Returns: ['trigger-workflow', 'create-workflow', 'schedule-cron']
```

What We Tried First (And Why It Failed)

Before settling on the core/deferred split, we attempted three approaches that did not work. First, we tried optimizing tool descriptions—making them longer and more explicit to disambiguate similar operations. This helped marginally but hit diminishing returns quickly; beyond a certain point, longer descriptions just consume more tokens without improving distinctiveness.

Second, we experimented with dynamic tool selection: using a smaller LLM to pre-select which tools to include based on the initial user query. This added unacceptable latency (an extra 800–1200ms per session) and created a circular dependency where the selector model needed to understand tools well enough to choose them, but was itself limited by context.

Third, we tried random subsetting—exposing only 30 random tools per session. This was unpredictable and dangerous; agents would fail to find critical tools simply because they were not in the random draw that session. The core/deferred approach solves this by making the split deterministic and based on actual usage patterns rather than chance.

The Three-Question Test

To prevent the core set from creeping back toward bloat, we developed a strict classification heuristic used in every PR review:

- Turn-one necessity: Does the agent need this tool in the first turn to bootstrap or make progress? If no, defer.

- Session frequency: Is this tool called in more than 50% of sessions? If no, defer.

- Semantic collision: Is this tool’s name semantically close to more than two deferred tools? If yes, keeping it in core creates ambiguity; defer.

For example, send-message passes all three: needed in turn one for most sessions, used in roughly 80% of sessions, and distinct from deferred tools. create-workflow fails the first two: rarely needed immediately and used in perhaps 15% of sessions. list-channels fails the third: it collides with list-services, list-integrations, and list-agents, so we moved it to deferred and promoted search-memory to core as the generic discovery path.
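The three questions translate directly into a small classification function. This is a sketch under assumptions: the `ToolStats` shape and its field names are hypothetical, and the values for `list-channels` on the first two questions are placeholders, since only its failure on the third question is stated above.

```typescript
// Hypothetical per-tool statistics gathered from production sessions.
interface ToolStats {
  name: string;
  neededInFirstTurn: boolean;     // question 1: turn-one necessity
  sessionFrequency: number;       // question 2: fraction of sessions (0..1) calling the tool
  similarDeferredNames: string[]; // question 3: deferred tools with semantically close names
}

type Tier = "core" | "deferred";

// Applies the three questions in order; failing any one of them defers the tool.
function classifyTool(stats: ToolStats): Tier {
  if (!stats.neededInFirstTurn) return "deferred";
  if (stats.sessionFrequency <= 0.5) return "deferred";
  if (stats.similarDeferredNames.length > 2) return "deferred";
  return "core";
}

const sendMessage = classifyTool({
  name: "send-message",
  neededInFirstTurn: true,
  sessionFrequency: 0.8,
  similarDeferredNames: [],
}); // "core"

const createWorkflow = classifyTool({
  name: "create-workflow",
  neededInFirstTurn: false,
  sessionFrequency: 0.15,
  similarDeferredNames: [],
}); // "deferred"

const listChannels = classifyTool({
  name: "list-channels",
  neededInFirstTurn: true, // placeholder value
  sessionFrequency: 0.6,   // placeholder value
  similarDeferredNames: ["list-services", "list-integrations", "list-agents"],
}); // "deferred"
```

Encoding the rules as code keeps the review discussion about the inputs (the measured frequency, the list of colliding names) rather than about the verdict.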

Measurement Script

To determine if you are approaching the cliff, measure your tool schema size:

```typescript
// Assumed minimal shape of a registered tool (only the fields serialized below).
interface Tool {
  name: string;
  description?: string;
  inputSchema: object;
}

function measureToolSectionTokens(tools: Tool[]): number {
  // Rough approximation: 1 token ≈ 4 characters for JSON schemas
  const schemaJson = JSON.stringify(
    tools.map(t => ({
      name: t.name,
      description: t.description,
      inputSchema: t.inputSchema
    }))
  );
  return Math.ceil(schemaJson.length / 4);
}

// In our case:
// All 90 tools: ~14,200 tokens
// Core 16 tools: ~2,800 tokens
// Threshold for Claude Code: ~10,000 tokens
```

The Measured Outcome

The impact was immediate and measurable. Our system prompt size for the tools section dropped from approximately 14,000 tokens to approximately 3,000 tokens. Tool-selection accuracy on our held-out task set rose from the low seventies to the mid-nineties. Per-session token costs fell because deferred tools no longer round-tripped through the system prompt on every single turn.

| Metric | Before (90 tools) | After (16 core + 74 deferred) |
|---|---|---|
| System prompt (tools only) | ~14,000 tokens | ~3,000 tokens |
| Tool selection accuracy | ~73% | ~94% |
| Avg turns per task completion | 4.2 | 2.1 |
| Tool thrashing rate* | High | Minimal |

*Thrashing = selecting wrong tool, receiving error, then selecting correct tool.

The less quantifiable but more important improvement: the agents stopped thrashing. Previously, we would watch sessions where an agent oscillated between create-channel and send-message, or between list-workflows and get-tasks. With only 16 core tools loaded, the agent makes decisive choices. When it needs specialized workflow tools, it explicitly searches for them, resulting in intentional rather than accidental tool use.
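Thrashing can be turned into a number with a simple heuristic over the call log. The sketch below assumes a hypothetical `ToolCall` log entry and treats "a failed call immediately followed by a successful call to a different tool" as a proxy for the wrong-tool, error, right-tool pattern; it will miss thrashing that spans more than two calls.

```typescript
// Hypothetical log entry: one tool call, in the order the agent made it.
interface ToolCall {
  tool: string;
  ok: boolean; // false when the call returned an error
}

// Counts failed calls that are immediately followed by a successful call to a
// different tool. A retry of the same tool is not counted as thrashing.
function countThrashEvents(calls: ToolCall[]): number {
  let events = 0;
  for (let i = 0; i + 1 < calls.length; i++) {
    const current = calls[i];
    const next = calls[i + 1];
    if (!current.ok && next.ok && next.tool !== current.tool) events += 1;
  }
  return events;
}

// Fraction of sessions (0..1) that contain at least one thrash event.
function thrashingRate(sessions: ToolCall[][]): number {
  if (sessions.length === 0) return 0;
  const thrashed = sessions.filter((calls) => countThrashEvents(calls) > 0).length;
  return thrashed / sessions.length;
}
```

A rate computed this way could replace qualitative labels such as "High" and "Minimal" in a results table.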

Tool Count Is a Code Smell

We now treat any PR that adds a tool to CORE_TOOLS with the same scrutiny as a PR that adds a parameter to a public function signature. It requires explicit justification and changes the public contract for every session. Meanwhile, the deferred registry has grown from 30 to 74 tools over six months without any degradation in agent behavior.
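That scrutiny can be backed by an automated check that fails the build when the core set outgrows a token budget. The following is a minimal sketch, not our actual CI step: `ToolSchema`, `checkCoreBudget`, and the 4,000-token budget are hypothetical, and the estimate reuses the rough four-characters-per-token heuristic from the measurement script.

```typescript
// Minimal tool shape for the check (hypothetical; adapt to your registry).
interface ToolSchema {
  name: string;
  description: string;
  inputSchema: object;
}

interface BudgetResult {
  estimatedTokens: number;
  withinBudget: boolean;
}

// Estimates the serialized size of the core tools (~4 characters per token)
// and compares it with a maximum token budget.
function checkCoreBudget(coreTools: ToolSchema[], maxTokens: number): BudgetResult {
  const estimatedTokens = Math.ceil(JSON.stringify(coreTools).length / 4);
  return { estimatedTokens, withinBudget: estimatedTokens <= maxTokens };
}

// Example: a single small tool checked against an assumed 4,000-token budget.
const sampleCore: ToolSchema[] = [
  {
    name: "send-message",
    description: "Send a message to a channel or agent.",
    inputSchema: { type: "object", properties: { text: { type: "string" } } },
  },
];
const budgetCheck = checkCoreBudget(sampleCore, 4000);
if (!budgetCheck.withinBudget) {
  throw new Error(`Core tools use ~${budgetCheck.estimatedTokens} tokens, over budget`);
}
```

Run as part of the test suite, a check like this turns "core bloat" from a review-time judgment into a failing build.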

The right question is not “how many tools does my agent have?” but “what fraction of tools could it discover on demand versus what fraction must be in turn one?” Teams celebrating growing tool catalogs the way Java teams once celebrated lines-of-code growth are optimizing the wrong metric. Tool count is not progress; it is a liability.

The Transferable Framework

Even if you are not using Claude Code, this pattern generalizes to any tool-using LLM system:

- Instrument tool-selection accuracy per session. If you are not measuring this, you are flying blind.

- Measure your tools-section token count and find your provider’s threshold for attention degradation.

- Classify tools by frequency-of-use across real production sessions, not anticipated use.

- Provide an explicit discovery primitive (search or list-by-tag) so deferred tools remain findable without being resident.

- Write classification rules into a config file that gets reviewed at every change, preventing gradual core bloat.

The Prediction

Within twelve months, every mature MCP server framework—FastMCP, the official MCP SDK, and third-party hosters—will ship a “core/deferred” classification primitive in their tool registration API. Anthropic and OpenAI will document their explicit cliff thresholds instead of hiding them behind opaque behavior changes.

Teams currently running 50+ tool MCP servers without this discipline are quietly losing 15–30% of their tool-selection accuracy and lack the instrumentation to notice. The fix is architectural, not algorithmic. Hide your tools. Make them discoverable. Treat context window as the scarce resource it is.

Your agent will thank you by making better decisions, faster.

FAQ

Does this pattern work outside Claude Code?

Yes. Any LLM system that keeps tool schemas in context can suffer similar degradation as the tools section grows. The specific token threshold varies by model and harness, but the architectural pattern (a resident core plus a discoverable deferred tier) applies broadly to high-tool-count systems.

How do I know if I have hit the context cliff?

Instrument tool-selection accuracy per session. If you observe agents cycling between similar tools, selecting generic over specific tools, or requiring multiple correction turns, you have exceeded your model’s in-context tool capacity.

Isn’t the search latency expensive?

A cache miss costs one to two turns of discovery, but carrying 74 unused tools costs attention on every single turn. We observed a net latency reduction because agents complete tasks in fewer total turns without thrashing between irrelevant options.
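The trade-off can be made concrete with back-of-the-envelope arithmetic. The sketch below assumes the full tools section is re-sent on every turn and ignores both prompt caching and the tokens returned by discovery calls; the inputs are the rounded figures from the results table.

```typescript
// Tool-schema tokens carried across one task, assuming the tools section is
// re-sent in full on every turn (no prompt caching).
function schemaTokensPerTask(residentTokens: number, avgTurns: number): number {
  return Math.round(residentTokens * avgTurns);
}

const tokensBefore = schemaTokensPerTask(14000, 4.2); // all 90 tools resident
const tokensAfter = schemaTokensPerTask(3000, 2.1);   // 16 core tools resident

console.log(tokensBefore, tokensAfter); // 58800 6300
```

Under these simplifying assumptions, the schema overhead per task falls by roughly a factor of nine, which leaves ample room for the occasional discovery turn.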

Should I implement this for 20 tools?

Probably not. In our experience, the cliff appears somewhere between 50 and 100 tools, depending on schema complexity. Below 30 tools, the overhead of discovery infrastructure outweighs the context savings. Above 50, the discipline becomes essential.

How do agents know what to search for?

Core tools include semantic discovery primitives. When an agent needs workflow functionality, it searches for “workflow” and receives relevant deferred tools. This mirrors human behavior—consulting documentation when specialized tools are needed.
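For teams building their own discovery primitive, even a plain keyword match over tool metadata is a workable starting point. The sketch below is a hypothetical stand-in, not how Claude Code's Tool Search or our server ranks results: `DeferredToolEntry`, its `tags` field, the sample descriptions, and the scoring rule are all assumptions made for illustration.

```typescript
// Hypothetical metadata kept for each deferred tool.
interface DeferredToolEntry {
  name: string;
  description: string;
  tags: string[];
}

// Scores each tool by how many query words appear in its name, description,
// or tags, then returns up to `limit` tool names with a non-zero score,
// highest score first.
function searchDeferredTools(
  registry: DeferredToolEntry[],
  query: string,
  limit = 3,
): string[] {
  const words = query.toLowerCase().split(/\s+/).filter((w) => w.length > 0);
  return registry
    .map((tool) => {
      const haystack = [tool.name, tool.description, ...tool.tags].join(" ").toLowerCase();
      const score = words.filter((w) => haystack.includes(w)).length;
      return { name: tool.name, score };
    })
    .filter((hit) => hit.score > 0)
    .sort((a, b) => b.score - a.score)
    .slice(0, limit)
    .map((hit) => hit.name);
}

const sampleRegistry: DeferredToolEntry[] = [
  { name: "export-data", description: "Export records", tags: ["data"] },
  { name: "schedule-cron", description: "Run a job on a schedule", tags: ["cron", "automation"] },
  { name: "create-workflow", description: "Define a new workflow", tags: ["workflow", "automation"] },
  { name: "trigger-workflow", description: "Run an existing workflow", tags: ["workflow", "automation", "trigger"] },
];

const searchHits = searchDeferredTools(sampleRegistry, "workflow automation trigger");
// searchHits: ['trigger-workflow', 'create-workflow', 'schedule-cron']
```

Substring matching is crude; an embedding-based ranker could replace the scoring step without changing the function's contract.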