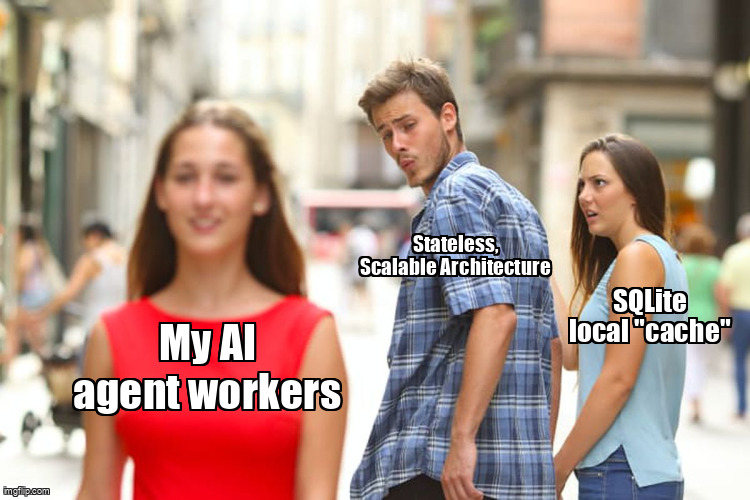

Our AI Worker Containers Have Zero Local Databases, and a 30-Line Bash Script That Makes It Impossible to Add One

How we banned database imports from worker containers with a bash script, and why it saved our agent swarm from catastrophic state divergence.

Three workers. Three SQLite databases. Three different beliefs about whether task #8472 was pending, in-progress, or complete.

We shipped three contradictory pull requests to the same Linear issue before anyone noticed. Each worker had “successfully” updated its own local state. None of them had checked with each other.

This is the story of why we banned database imports from our worker containers entirely—not with documentation, not with code review checklists, but with a 30-line bash script that runs in CI and fails the build if any worker tries to touch a database module.

The Incident: When “Fast Memory” Becomes Distributed Corruption

Our agent swarm architecture seemed sensible: an API server owns the task queue, workers pull tasks and execute them. But workers need context—previous actions, conversation history, cached embeddings. We added SQLite because “it’s just temporary state” and “direct SQL is faster than HTTP calls.”

The pattern was everywhere in our codebase. Workers would:

- Fetch a task from the API

- Write `UPDATE tasks SET status = 'in_progress'` to local SQLite

- Begin work, periodically syncing “real” progress back to the API

This worked fine with one worker. It worked fine with two. At three workers, the race conditions started.

Worker A pulled task #8472, wrote in_progress to its SQLite, started processing. Worker B, starting 400ms later, found the task still marked pending in the API (Worker A hadn’t synced yet), pulled it, wrote in_progress to its own SQLite, and started processing. Worker C did the same.
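The race is a classic check-then-act gap: each worker reads the shared status, then records its claim somewhere the others cannot see. A minimal simulation of that sequence, assuming in-memory stand-ins for the API and for each worker's SQLite file (`LocalStateWorker`, `tryClaim`, and `sync` are hypothetical names for illustration, not code from our repo):

```typescript
type Status = "pending" | "in_progress" | "complete";

// Stand-in for the API server's task table.
const apiStatus: Record<string, Status> = { "8472": "pending" };

class LocalStateWorker {
  id: string;
  // Stand-in for this worker's private SQLite database.
  local: Record<string, Status> = {};

  constructor(id: string) {
    this.id = id;
  }

  // Step 1: read the API, but record the claim only locally.
  tryClaim(taskId: string): boolean {
    if (apiStatus[taskId] !== "pending") return false;
    this.local[taskId] = "in_progress";
    return true;
  }

  // Step 2 (later): push the locally recorded status back to the API.
  sync(taskId: string): void {
    const status = this.local[taskId];
    if (status) apiStatus[taskId] = status;
  }
}

const workers = [
  new LocalStateWorker("A"),
  new LocalStateWorker("B"),
  new LocalStateWorker("C"),
];

// All three claims land before any worker syncs, as in the incident.
const claimed = workers.filter((w) => w.tryClaim("8472")).map((w) => w.id);
workers.forEach((w) => w.sync("8472"));
```

Every worker's claim succeeds because nothing any of them wrote was visible to the others at the moment they checked.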

Three LLM inference sessions. Three conflicting code suggestions. Three PRs opened against the same Linear ticket. The API server eventually received three completion notifications and had to reconcile conflicting outputs.

The debugging session was a nightmare. Each worker’s SQLite had a different timeline. The API server had a fourth timeline. We had to manually reconstruct which worker did what when, cross-referencing container logs with database timestamps that weren’t synchronized.

The Rule: Six Paths, Two Patterns, Zero Exceptions

We made a decision immediately after that incident: worker containers may not import database modules, ever. Not for caching. Not for temporary state. Not for “just this one query.”

We codified this in three layers:

- Architectural: Workers are stateless executors. The API server is the sole owner of state.

- API design: Every worker action that previously touched the database now goes through HTTP endpoints.

- Enforcement: A bash script that runs in CI and fails the build on any violation.

The forbidden zones in our worker codebase:

- `src/commands/`: CLI commands that trigger agent actions

- `src/hooks/`: lifecycle hooks for task execution

- `src/providers/`: LLM provider integrations and tool definitions

- `src/prompts/`: prompt templates and context assembly

- `src/cli.tsx`: main entry point and command routing

- `src/claude.ts`: Claude-specific integration and response parsing

The two forbidden import patterns:

- `from 'be/db'`: our database query layer

- `bun:sqlite`: the raw SQLite driver

Pattern-based detection beats type-based enforcement because it’s trivial to verify and impossible to circumvent through type gymnastics. You either import the module or you don’t.

Why Bash, Not ESLint?

`// eslint-disable-next-line @agent-swarm/no-db-import` exists. ESLint rules can be bypassed with a comment. Bash grep checks the actual file content: a violation either exists or it doesn’t.

We tried lint rules first. They’re elegant. They integrate with IDEs. They provide helpful error messages. And they fail exactly when you need them most: under deadline pressure, when someone really needs to “just add one quick query.”

Bash is dumb. Bash is fast. Bash checks the actual file content. A violation either exists in the file or it doesn’t. There’s no semantic analysis to bypass, no configuration to tweak, no comment directive to suppress.

Here’s the full script we run in CI:

```bash
#!/bin/bash
# scripts/check-db-boundary.sh
# Enforces: workers may never import database modules

set -euo pipefail

# Forbidden patterns
DB_MODULE="from ['\"]be/db['\"]"
SQLITE_DRIVER="bun:sqlite"

# Worker paths that must remain database-free
WORKER_PATHS=(
  "src/commands/"
  "src/hooks/"
  "src/providers/"
  "src/prompts/"
  "src/cli.tsx"
  "src/claude.ts"
)

VIOLATIONS=0

for path in "${WORKER_PATHS[@]}"; do
  if [ -d "$path" ]; then
    if grep -rE "$DB_MODULE" "$path" --include="*.ts" --include="*.tsx" 2>/dev/null; then
      echo "ERROR: Database module import found in $path"
      VIOLATIONS=$((VIOLATIONS + 1))
    fi
    if grep -rE "$SQLITE_DRIVER" "$path" --include="*.ts" --include="*.tsx" 2>/dev/null; then
      echo "ERROR: SQLite driver import found in $path"
      VIOLATIONS=$((VIOLATIONS + 1))
    fi
  elif [ -f "$path" ]; then
    if grep -E "$DB_MODULE" "$path" 2>/dev/null; then
      echo "ERROR: Database module import found in $path"
      VIOLATIONS=$((VIOLATIONS + 1))
    fi
    if grep -E "$SQLITE_DRIVER" "$path" 2>/dev/null; then
      echo "ERROR: SQLite driver import found in $path"
      VIOLATIONS=$((VIOLATIONS + 1))
    fi
  fi
done

if [ $VIOLATIONS -gt 0 ]; then
  echo ""
  echo "Found $VIOLATIONS database boundary violation(s)."
  echo "Workers must remain stateless. Use HTTP APIs instead."
  exit 1
fi

echo "Database boundary check passed."
```

Run time: ~200ms on our entire worker codebase. The CI step looks like this:

```yaml
# .github/workflows/ci.yml
- name: Enforce database boundary
  run: |
    chmod +x scripts/check-db-boundary.sh
    ./scripts/check-db-boundary.sh
```

The Replacement: HTTP-First Architecture

Every worker action that previously touched the database now goes through our API server. The surface is simple:

| Old pattern | New pattern | Latency |
|---|---|---|
| `db.query('SELECT * FROM tasks...')` | `GET /api/tasks/:id` | +12ms |
| `db.exec('UPDATE tasks...')` | `POST /api/tasks/:id/progress` | +8ms |
| `INSERT INTO messages...` | `POST /api/messages` | +15ms |
| `SELECT * FROM context_cache...` | `GET /api/context/:taskId` | +11ms |

Measured via internal benchmarks, 1000 requests each, p50 latency. Worker and API server co-located in the same VPC.

Workers carry two headers on every request:

```typescript
// src/lib/api-client.ts
import axios from "axios";

// Task and ProgressUpdate are the worker's shared types (definitions not shown here).

// One preconfigured client: every request carries the API key and the agent's identity.
const apiClient = axios.create({
  baseURL: process.env.API_SERVER_URL,
  headers: {
    'Authorization': `Bearer ${process.env.API_KEY}`,
    'X-Agent-ID': process.env.AGENT_ID,
  },
});

// All state access goes through this client
export async function fetchTask(taskId: string): Promise<Task> {
  const { data } = await apiClient.get(`/api/tasks/${taskId}`);
  return data;
}

export async function updateProgress(
  taskId: string,
  progress: ProgressUpdate
): Promise<void> {
  await apiClient.post(`/api/tasks/${taskId}/progress`, progress);
}
```

The 8–15ms overhead per call is noise compared to LLM inference time. A typical agent task involves 3–5 API calls and 2–4 LLM inferences. The HTTP overhead is under 100ms; the LLM calls are 2–30 seconds.

The Counterintuitive Benefit Nobody Warned Us About

Debugging a multi-agent failure used to require checking N container logs plus N local databases, then reconciling N parallel timelines. Now it’s: check the API database, replay the task ID.

Single source of truth makes every cross-agent investigation an order of magnitude faster. When three workers interact with a task, there’s exactly one timeline in the API database—not three timelines that have to be merged with timestamps that may or may not be synchronized.

The mental model is simple: the API server is the computer. Workers are just CPUs that execute instructions. They don’t have memory. They don’t have state. They don’t have opinions about what “should” be happening.
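To make "one timeline" concrete, here is a minimal sketch of a server-side event log that can be replayed by task ID. It assumes the API server stamps every state change with its own sequence number, so ordering never depends on worker clocks. The `TaskEvent` shape and the `TaskTimeline` class are hypothetical illustrations, not our actual schema:

```typescript
// Hypothetical event shape; field names are illustrative only.
interface TaskEvent {
  taskId: string;
  agentId: string;
  seq: number; // assigned by the server, not by the worker
  action: string;
}

class TaskTimeline {
  private events: TaskEvent[] = [];
  private nextSeq = 1;

  // Called by the API server whenever a worker request changes task state.
  record(taskId: string, agentId: string, action: string): TaskEvent {
    const event: TaskEvent = { taskId, agentId, seq: this.nextSeq++, action };
    this.events.push(event);
    return event;
  }

  // Replay everything that happened to one task, in server order.
  replay(taskId: string): string[] {
    return this.events
      .filter((e) => e.taskId === taskId)
      .map((e) => `${e.seq} ${e.agentId} ${e.action}`);
  }
}

const timeline = new TaskTimeline();
timeline.record("8472", "worker-a", "claimed");
timeline.record("9001", "worker-b", "claimed");
timeline.record("8472", "worker-a", "progress");
timeline.record("8472", "worker-a", "completed");

// Only the events for task 8472, already in the order the server saw them.
const history = timeline.replay("8472");
```

Because the sequence numbers come from a single place, there is nothing to merge and no clock skew to reason about.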

The Ripple Effect: What Statelessness Unlocked

Once workers became stateless, three things became trivial:

Horizontal scaling became a non-event. Any container can handle any task because they’re identical. No sticky sessions. No data migration. No “warmup” containers that have cached state. We scale by adding containers to a pool. The API server’s task queue handles distribution.

Workers became ephemeral. We run workers as Docker containers that get killed and recreated constantly. A worker that crashes mid-task simply… stops. Its replacement pulls the same task from the queue and continues. No state to preserve. No “graceful shutdown” handler that might fail.

API design became rigorous. When you can’t take shortcuts through the database, every data access pattern needs a proper API endpoint. This forced us to design clean boundaries between concerns. The API server is now a well-documented service with explicit contracts. Workers are dumb clients that don’t need to understand database schemas.
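A minimal sketch of how a queue can support both properties, assuming claims are made atomically on the server and expire after a lease. The `TaskQueue` class, its field names, and the 30-second lease are hypothetical illustrations, not the production implementation:

```typescript
// Hypothetical server-side task record.
interface QueuedTask {
  id: string;
  status: "pending" | "in_progress" | "complete";
  claimedBy?: string;
  leaseExpiresAt?: number;
}

class TaskQueue {
  private tasks: QueuedTask[] = [];
  private leaseMs: number;

  constructor(leaseMs: number) {
    this.leaseMs = leaseMs;
  }

  add(id: string): void {
    this.tasks.push({ id, status: "pending" });
  }

  // Claim the first task that is pending or whose lease has expired.
  // The check and the write happen in one place, so two workers can
  // never both see "pending" for the same task.
  claimNext(agentId: string, now: number): QueuedTask | undefined {
    for (const task of this.tasks) {
      const expired =
        task.status === "in_progress" && (task.leaseExpiresAt ?? 0) <= now;
      if (task.status === "pending" || expired) {
        task.status = "in_progress";
        task.claimedBy = agentId;
        task.leaseExpiresAt = now + this.leaseMs;
        return { ...task }; // hand the worker a copy, never the live record
      }
    }
    return undefined;
  }
}

const queue = new TaskQueue(30_000);
queue.add("8472");

const first = queue.claimNext("worker-a", 0);      // worker-a gets the task
const second = queue.claimNext("worker-b", 1_000); // nothing left to claim
const retry = queue.claimNext("worker-c", 31_000); // worker-a's lease has expired
```

If `worker-a` crashes, nothing has to clean up after it: the lease simply runs out and the next worker to ask receives the task.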

What We Tried That Didn’t Work

Before settling on the bash script, we experimented with softer enforcement:

- Code review checklists: forgotten under deadline pressure. Reviewers don’t catch violations in 400-line PRs.

- ESLint rules with custom plugins: bypassed with `// eslint-disable`. The escape hatch becomes the default during crunch.

- Runtime detection: checking imports at worker startup. This catches violations late, after deployment, when the build already passed.

- Architecture Decision Records: great for onboarding, useless for enforcement. Developers don’t re-read ADRs before submitting PRs.

The bash script won because it’s the only option with zero escape hatches. You cannot bypass a grep. You cannot negotiate with a failing CI check at 2 AM when you need to ship a hotfix.

The Pattern: Apply This to Any Agent System

You don’t need our exact stack to use this pattern. The general form is:

- Identify your orchestrator (owns state) and workers (execute tasks)

- Define the boundary—which modules/paths workers may not access

- Write a dumb script that greps for violations

- Make it a merge gate in CI

- Replace direct state access with HTTP/queue messages
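For stacks where bash is not convenient, steps 2 and 3 of the list above can be sketched in a few lines of TypeScript. This is an illustration under stated assumptions, not a replacement for the script: `BoundaryRule`, `Violation`, and `checkBoundary` are hypothetical names, and the files are passed in as an in-memory map so the example stays self-contained.

```typescript
interface BoundaryRule {
  // Path prefixes (directories or single files) that belong to workers.
  protectedPaths: string[];
  // Patterns that must never appear inside those paths.
  forbidden: RegExp[];
}

interface Violation {
  file: string;
  line: number;
  text: string;
}

// Scan a map of file path -> file contents and report each offending line.
function checkBoundary(files: Record<string, string>, rule: BoundaryRule): Violation[] {
  const violations: Violation[] = [];
  for (const file of Object.keys(files)) {
    const isProtected = rule.protectedPaths.some((p) => file.indexOf(p) === 0);
    if (!isProtected) continue;
    files[file].split("\n").forEach((text, index) => {
      if (rule.forbidden.some((re) => re.test(text))) {
        violations.push({ file, line: index + 1, text: text.trim() });
      }
    });
  }
  return violations;
}

const rule: BoundaryRule = {
  protectedPaths: ["src/commands/", "src/cli.tsx"],
  forbidden: [/from ['"]be\/db['"]/, /bun:sqlite/],
};

const report = checkBoundary(
  {
    // Worker code importing the database layer: a violation.
    "src/commands/run.ts": 'import { db } from "be/db";\nexport const run = () => db;',
    // Server code may use the driver; it sits outside the protected paths.
    "src/server/store.ts": 'import { Database } from "bun:sqlite";',
    // Worker code going through the API client: allowed.
    "src/cli.tsx": 'import { fetchTask } from "./lib/api-client";',
  },
  rule,
);
```

A CI wrapper would read the real files from disk and exit non-zero when `report` is non-empty; the check itself stays a plain text match.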

The enforcement mechanism matters more than the specific technology. Discipline degrades under deadline pressure; bash scripts don’t.

The Prediction: Where Agent Frameworks Are Heading

Within 12 months, every mature agent framework will ship with a default “workers must not access shared state directly” invariant. The alternative is debugging race conditions that surface as “flaky agent behavior” weeks after deployment.

Teams running 10+ concurrent agents who give each worker database access are quietly racking up state divergence bugs. They manifest as:

- Agents that “forget” constraints mentioned in previous turns

- Tasks marked complete by one agent and pending by another

- Duplicate actions executed by different workers

- Intermittent failures that can’t be reproduced with single-worker testing

These aren’t flaky agents. They’re distributed systems bugs wearing agent costumes. The fix isn’t better prompting—it’s architectural separation enforced with tooling.

When Should You Add This Rule?

What About “Just Cache Embeddings Locally”?

The Script Is the Keystone

Every architectural decision in our agent swarm traces back to that 30-line bash script. Stateless workers. Ephemeral containers. Clean API boundaries. Horizontal scaling without config changes. Debugging via single timeline replay.

The script isn’t clever. It’s not even elegant. But it’s the invariant that holds everything together, and it will outlast every engineer on the team. That’s the point. Architectural boundaries enforced with tooling survive team turnover, late-night refactors, and “just this once” shortcuts.

Your agent swarm will face the same temptation. The question is whether you’ll enforce the boundary before the incident, or after.