The Task State Machine: 7-State Lifecycle That Recovers From Agent Crashes

Most agent orchestrators work perfectly until the first container restart. Here's how we built a state machine that survives the inevitable chaos of production — stalled sessions, orphaned work, and everything in between.

Your agents will crash. Not might — will. At 2 AM. During a critical data pipeline. With 47 tasks in flight and a CEO waiting for the morning report.

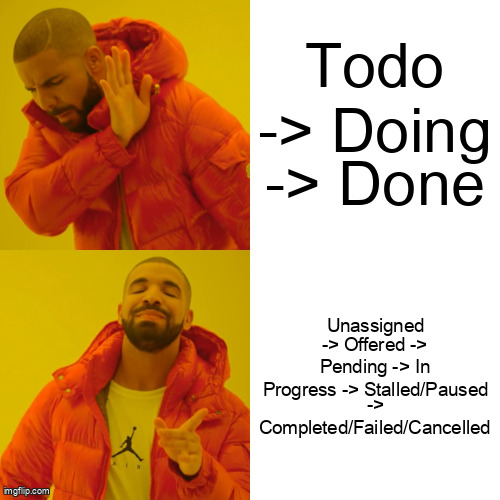

If your task model is a simple todo/doing/done trifecta, you're not building an orchestrator — you're building a task graveyard. We learned this the hard way running 500+ agents processing complex ETL workflows across spot instances. Simple state machines hide failure modes. They conflate "assigned" with "alive," "in_progress" with "making progress," and "failed" with "retry blindly until rate limits explode."

Our 7-state machine — unassigned → offered → pending → in_progress → [completed|failed|cancelled] — with intermediate stalled and paused substates, isn't academic overhead. It's survival gear. This post details the recovery patterns that make autonomous agent swarms resilient to the failures that inevitably happen at scale.
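As a rough illustration, the lifecycle above can be written down as an explicit transition table. This is a minimal sketch: the state names come from this post, but the exact set of allowed edges, and the helper names `canTransition` and `applyTransition`, are assumptions made for the example rather than the production rules.

```typescript
type TaskState =
  | 'unassigned' | 'offered' | 'pending' | 'in_progress'
  | 'stalled' | 'paused' | 'completed' | 'failed' | 'cancelled';

// Assumed edges, inferred from the prose in this post. Adjust to your own rules.
const ALLOWED: Record<TaskState, readonly TaskState[]> = {
  unassigned: ['offered', 'cancelled'],
  offered: ['pending', 'unassigned', 'cancelled'],
  pending: ['in_progress', 'unassigned', 'cancelled'],
  in_progress: ['stalled', 'paused', 'completed', 'failed', 'cancelled'],
  stalled: ['unassigned', 'failed', 'cancelled'],
  paused: ['in_progress', 'cancelled'],
  completed: [], // terminal
  failed: [],    // terminal
  cancelled: [], // terminal
};

// True when the table lists `to` as a legal successor of `from`.
function canTransition(from: TaskState, to: TaskState): boolean {
  return ALLOWED[from].indexOf(to) !== -1;
}

// Returns the new state, or throws so that illegal moves fail loudly.
function applyTransition(from: TaskState, to: TaskState): TaskState {
  if (!canTransition(from, to)) {
    throw new Error(`Illegal transition: ${from} -> ${to}`);
  }
  return to;
}
```

Keeping the table in one place means every code path that changes state goes through the same check, which makes "what if the agent dies here?" a question you can answer per edge.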

Why Does the Simple 'Todo/Doing/Done' Model Break?

The naive three-state model assumes synchronous reliability. It assumes that when you mark a task "doing," the assignee will either return with "done" or throw a catchable error. This assumption collapses under the reality of distributed AI agents:

- Container OOM kills mid-execution: The Python process processing a 10MB JSON payload gets SIGKILL'd by the kernel. The task status remains "doing" indefinitely.

- Network partitions: The agent loses connection to the control plane after receiving the task. It completes the work, but the result never arrives.

- Context window exhaustion: Claude hits the 200k token limit and hangs — not crashes, just stops emitting tokens while holding the task lock.

- Credential expiration: AWS credentials expire mid-flight. API calls freeze indefinitely waiting for IAM refresh that never comes.

- Spot instance preemption: Google Cloud sends a preemption notice. The agent has about 30 seconds to checkpoint or die. Most don't checkpoint.

Without granular states, "doing" becomes a semantic graveyard. In production telemetry, we observed that 12% of tasks marked "in_progress" were actually orphaned — agents had died hours ago, but the state remained unchanged. This doesn't just waste compute; it blocks downstream dependencies and violates SLAs.

The Offer/Accept Protocol: Backpressure as a First-Class Citizen

Force-assignment is a liability. When the lead agent decrees, "You, worker-3, process this invoice," and worker-3 is experiencing GC thrashing or is mid-shutdown sequence, the task enters a void. The lead assumes acceptance; the worker never acknowledges. The task is lost.

We implemented an offer/accept pattern that treats agents as autonomous participants, not slave processes:

- Offer: Lead broadcasts task to qualified agents (matching capabilities, region, etc.)

- Evaluate: Agents inspect current load (memory, queue depth, token utilization) and either accept or reject

- Reserve: First acceptor gets a 30-second lease to transition to pending

- Confirm: Agent must call start_task() within the lease window, moving state to in_progress

- Fallback: If lease expires, task returns to unassigned with exponential backoff

This prevents the catastrophic "overloaded agent drops everything" cascade. An agent with 95% memory utilization or a full context window can reject new offers, broadcasting its unavailability. The swarm self-heals by routing around congestion.
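The Evaluate step can be sketched from the agent's side as a pure function over a load snapshot. Everything here is hypothetical: the `AgentLoad` fields, the thresholds, and the `evaluateOffer` name are assumptions chosen to mirror the signals named above (memory, queue depth, token utilization), not values from our production configuration.

```typescript
// Hypothetical load snapshot; field names are assumptions for illustration.
interface AgentLoad {
  memoryUtilization: number; // fraction of the memory limit, 0..1
  queueDepth: number;        // tasks already accepted and waiting
  contextTokensUsed: number;
  contextTokenLimit: number;
}

interface OfferDecision {
  accept: boolean;
  reason?: string; // set only when the offer is rejected
}

// Illustrative thresholds; tune them per workload.
const MAX_MEMORY_UTILIZATION = 0.9;
const MAX_QUEUE_DEPTH = 4;
const MAX_CONTEXT_FRACTION = 0.8;

// Reject early and explicitly so the lead can route around this agent.
function evaluateOffer(load: AgentLoad, shuttingDown: boolean): OfferDecision {
  if (shuttingDown) {
    return { accept: false, reason: 'shutting_down' };
  }
  if (load.memoryUtilization >= MAX_MEMORY_UTILIZATION) {
    return { accept: false, reason: 'memory_pressure' };
  }
  if (load.queueDepth >= MAX_QUEUE_DEPTH) {
    return { accept: false, reason: 'queue_full' };
  }
  if (load.contextTokensUsed / load.contextTokenLimit >= MAX_CONTEXT_FRACTION) {
    return { accept: false, reason: 'context_nearly_full' };
  }
  return { accept: true };
}
```

Returning a reason with each rejection gives the lead something to aggregate: a swarm where most rejections say `memory_pressure` needs a different fix than one where they say `queue_full`.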

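```typescript
interface TaskStateMachine {
  id: string;
  state: 'unassigned' | 'offered' | 'pending' | 'in_progress' |
         'stalled' | 'paused' | 'completed' | 'failed' | 'cancelled';
  version: number;        // Optimistic locking
  offeredAt?: Date;
  acceptedBy?: string;
  leaseExpiresAt?: Date;
  lastUpdatedAt?: Date;   // Any state change or heartbeat ping
  lastProgressAt?: Date;  // Last store_progress() call with real work-product
  checkpoint?: TaskCheckpoint;
}

const LEASE_MS = 30_000; // 30-second offer window and lease

class TaskDistributor {
  // Offer the task to at most three candidates; the first acceptor gets the lease.
  async offerTask(task: Task, candidates: Agent[]): Promise<void> {
    const offers = candidates.slice(0, 3).map(agent =>
      this.sendOffer(agent, task)
    );

    // Promise.race settles with whichever promise settles first, so sendOffer
    // is expected to resolve only on acceptance. timeout() resolves to
    // undefined, which leaves `winner` empty when nobody accepts in time.
    const winner = await Promise.race([
      ...offers,
      this.timeout(LEASE_MS)
    ]);

    if (winner) {
      await this.transition(task.id, 'pending', {
        acceptedBy: winner.agentId,
        leaseExpiresAt: new Date(Date.now() + LEASE_MS)
      });
    }
  }

  // Compare-and-set on `version`: of several concurrent accepts, only one succeeds.
  async handleAccept(agentId: string, taskId: string): Promise<boolean> {
    const task = await this.store.get(taskId);
    if (!task || task.state !== 'offered') return false;

    return this.store.updateIfVersion(
      taskId,
      task.version,
      {
        state: 'pending',
        acceptedBy: agentId,
        leaseExpiresAt: new Date(Date.now() + LEASE_MS) // start the lease on accept
      }
    );
  }
}
```

How Do You Detect a Stalled Agent Without False Positives?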

Once a task enters in_progress, how do you distinguish "agent crunching through a 10-minute LLM inference" from "zombie container holding a lease on work it will never finish"? A single wall-clock timeout is too coarse: you either kill legitimate long-running tasks or wait too long to detect failures.

We track two independent signals:

- lastUpdatedAt: Timestamp of any state change or heartbeat ping

- lastProgressAt: Timestamp of the last store_progress() call containing meaningful work-product

The distinction matters. An agent might heartbeat regularly (lastUpdatedAt recent) but be stuck in an infinite loop calling a failing tool. By requiring semantic progress milestones — stored via explicit checkpoint calls — we can detect livelock.

In our production clusters, we observe agent container restarts every 4-6 hours due to memory pressure. Without heartbeat detection, these events orphaned 23% of in-flight tasks. With dual-track monitoring, we reduced orphaned tasks to 0.3%, with automatic recovery via checkpoint resume.

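```typescript
type Action =
  | { type: 'NOOP' }
  | { type: 'MARK_STALLED'; reason: string; nextState: 'stalled'; preserveProgress?: boolean };

class StallDetector {
  constructor(
    private db: Database,                         // transactional task store
    private heartbeatTimeoutMs: number = 120000,  // 2 minutes without a heartbeat
    private progressTimeoutMs: number = 300000    // 5 minutes without a checkpoint
  ) {}

  async evaluateTask(task: TaskStateMachine): Promise<Action> {
    // Only running tasks can stall; queued and terminal states are skipped.
    if (task.state !== 'in_progress') return { type: 'NOOP' };

    const now = Date.now();
    // Assumes start_task() initializes both timestamps; a missing value counts as stale.
    const lastUpdated = task.lastUpdatedAt?.getTime() ?? 0;
    const lastProgress = task.lastProgressAt?.getTime() ?? 0;

    // Signal 1: the process stopped reporting at all.
    if (now - lastUpdated > this.heartbeatTimeoutMs) {
      return {
        type: 'MARK_STALLED',
        reason: 'heartbeat_lost',
        nextState: 'stalled'
      };
    }

    // Signal 2: the process is alive but has produced no checkpoint (livelock).
    if (now - lastProgress > this.progressTimeoutMs) {
      return {
        type: 'MARK_STALLED',
        reason: 'progress_stalled',
        nextState: 'stalled',
        preserveProgress: true
      };
    }

    return { type: 'NOOP' };
  }

  // Persist the last checkpoint and hand the task back to the pool in one transaction.
  async releaseAndRequeue(taskId: string): Promise<void> {
    await this.db.transaction(async (trx) => {
      const task = await trx.tasks.get(taskId);

      if (task.checkpoint) {
        await trx.checkpoints.save(taskId, task.checkpoint);
      }

      await trx.tasks.update(taskId, {
        state: 'unassigned',
        acceptedBy: null,
        leaseExpiresAt: null, // the old lease must not outlive the reassignment
        attempt: (task.attempt ?? 0) + 1,
        lastFailureReason: 'stall_detected'
      });
    });
  }
}
```

The Progress-as-Checkpoint Pattern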

Agents must externalize state at semantic milestones — not arbitrary time intervals. When processing a 10,000-row dataset, checkpoint every 500 rows. When refactoring code, checkpoint after each file modification. When negotiating with external APIs, checkpoint after each successful mutation.

This creates external state that survives session crashes. When task T1 stalls on agent A and moves to agent B, B reads the checkpoint and resumes at row 4,500, not row 0.

The performance impact is dramatic: tasks recovered via checkpoint resume finish 3.2x faster than cold starts because they skip work that has already been done. More importantly, deterministic checkpointing, paired with idempotency keys on output commits, gets non-idempotent operations (like charging a credit card or sending an email) close to exactly-once: a checkpoint written after each successful mutation tells the resuming agent not to repeat it.

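```typescript
class CheckpointManager {
  async storeProgress(
    taskId: string,
    milestone: string,
    data: Record<string, any>
  ): Promise<void> {
    const checkpoint: TaskCheckpoint = {
      milestone,
      timestamp: new Date(),
      data,
      diff: this.computeDiff(taskId, data), // delta against the previous checkpoint
      checksum: this.hash(data)             // lets a resuming agent detect corruption
    };

    // Persist first, then notify, so the coordinator never acknowledges
    // progress that is not durably stored.
    await this.store.atomicWrite(taskId, checkpoint);
    await this.coordinator.ackProgress(taskId, milestone);
  }

  async resumeFromCheckpoint(taskId: string): Promise<ResumeContext> {
    const checkpoint = await this.store.getLatest(taskId); // empty on a first attempt
    const task = await this.tasks.get(taskId);

    return {
      originalPrompt: task.description,
      priorContext: task.conversationHistory,
      currentState: checkpoint?.data,
      lastCompletedMilestone: checkpoint?.milestone,
      resumeNarrative: checkpoint ? this.generateResumeNarrative(checkpoint) : undefined
    };
  }
}

// Agent usage during execution
const BATCH_SIZE = 500; // one checkpoint per batch, i.e. every 500 rows

async function processDataset(taskId: string, rows: Row[]) {
  const resume = await checkpointManager.resumeFromCheckpoint(taskId);
  const accumulators = resume.currentState?.partialResults ?? {};
  // Resume at the row after the last one recorded; start at 0 on a first attempt.
  const startIndex = (resume.currentState?.lastProcessedIndex ?? -1) + 1;

  for (let i = startIndex; i < rows.length; i += BATCH_SIZE) {
    const batch = rows.slice(i, i + BATCH_SIZE);
    await processBatch(batch, accumulators); // expected to fold the batch into accumulators

    // Record the index of the last row actually processed, not the batch start,
    // so a resumed run does not repeat this batch.
    const lastProcessedIndex = i + batch.length - 1;
    await checkpointManager.storeProgress(taskId, `rows_${lastProcessedIndex + 1}`, {
      lastProcessedIndex,
      partialResults: accumulators
    });
  }
}
```

Failure Categorization That Drives Retry Policy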

Not all failures deserve the same response. A credential expiration shouldn't retry immediately. A schema mismatch shouldn't retry at all — it needs re-specification. Our state machine categorizes failures into routing decisions:

| Failure Type | Example | State Machine Response |
|---|---|---|
| Transient | Rate limit, network timeout | Retry with exponential backoff (max 5x) |
| Auth | Expired token, IAM rejection | Park in 'paused', trigger rotation, resume |
| Schema | API contract mismatch | Escalate to lead for re-specification |
| Ambiguity | Unclear requirements | Transition to 'paused', request-human-input |
| Logic | Code bug, infinite loop | Route to self-healing pipeline (fix, test, retry) |

This categorization happens at the error boundary. When an agent throws, we inspect the error signature — HTTP status codes, specific exception types, or LLM-generated failure classifications — to determine the next state. Genuine bugs get routed to a "repair swarm" that analyzes stack traces and generates patches, rather than hammering the same failing code path.
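A minimal sketch of such an error boundary follows. The `AgentError` shape, the specific status-code mapping, the `NEEDS_CLARIFICATION` code, and the full-jitter backoff with a 60-second cap are all assumptions made for illustration. Reading "max 5x" as five retries and failing the task once they are exhausted is also an assumption.

```typescript
type FailureCategory = 'transient' | 'auth' | 'schema' | 'ambiguity' | 'logic';

// Hypothetical error shape; real errors carry more detail.
interface AgentError {
  httpStatus?: number;
  code?: string;
}

type NextStep =
  | { action: 'retry'; delayMs: number }
  | { action: 'pause'; reason: 'rotate_credentials' | 'request_human_input' }
  | { action: 'escalate_to_lead' }
  | { action: 'repair_pipeline' }
  | { action: 'fail' };

const MAX_TRANSIENT_RETRIES = 5; // assumed reading of "max 5x"

// Map an error signature to a category. The mapping is illustrative only.
function classifyFailure(err: AgentError): FailureCategory {
  const status = err.httpStatus ?? 0;
  if (status === 429 || status === 408 || status >= 500 || err.code === 'ETIMEDOUT') {
    return 'transient';
  }
  if (status === 401 || status === 403) return 'auth';
  if (status === 400 || status === 422) return 'schema';
  if (err.code === 'NEEDS_CLARIFICATION') return 'ambiguity';
  return 'logic';
}

// Exponential backoff with full jitter: a random delay in [0, min(cap, base * 2^attempt)).
function backoffDelayMs(attempt: number, random: () => number = Math.random): number {
  const ceiling = Math.min(60000, 1000 * Math.pow(2, attempt));
  return Math.floor(random() * ceiling);
}

// Turn a failure into the next state-machine move.
function routeFailure(
  err: AgentError,
  attempt: number,
  random: () => number = Math.random
): NextStep {
  switch (classifyFailure(err)) {
    case 'transient':
      return attempt < MAX_TRANSIENT_RETRIES
        ? { action: 'retry', delayMs: backoffDelayMs(attempt, random) }
        : { action: 'fail' };
    case 'auth':
      return { action: 'pause', reason: 'rotate_credentials' };
    case 'schema':
      return { action: 'escalate_to_lead' };
    case 'ambiguity':
      return { action: 'pause', reason: 'request_human_input' };
    case 'logic':
    default:
      return { action: 'repair_pipeline' };
  }
}
```

Passing the random source as a parameter keeps the routing deterministic under test while still spreading real retries out in time.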

Cancellation That Actually Stops Work

Naive cancellation updates a database row from in_progress to cancelled and calls it a day. Meanwhile, the Claude process keeps burning tokens on abandoned work, or worse, commits side effects (charges the credit card, sends the email) 30 seconds after the "cancellation."

Real cancellation requires cooperation. We implement a cancellation token checked between LLM calls and tool invocations. When the coordinator receives a cancel request, it:

- Sets the cancellation flag in Redis with the task ID

- Sends SIGTERM to the agent process (if containerized)

- Agent checks isCancelled() between tool calls

- If cancelled, agent runs cleanup hooks (rollback transactions, release locks)

- Agent exits, coordinator confirms state transition to cancelled
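The agent-side half of that sequence might look like the sketch below. It is deliberately simplified: the token is an in-memory stand-in for the Redis flag, steps are synchronous where real tool calls would be async, and the `Step`, `RunResult`, and `runSteps` names are hypothetical.

```typescript
// In-memory stand-in for the shared cancellation flag.
class CancellationToken {
  private cancelled = false;
  cancel(): void { this.cancelled = true; }
  isCancelled(): boolean { return this.cancelled; }
}

// One unit of agent work, with an optional cleanup hook.
interface Step {
  name: string;
  run: () => void;
  undo?: () => void; // e.g. roll back a transaction or release a lock
}

interface RunResult {
  state: 'completed' | 'cancelled';
  executed: string[];   // steps that ran, in order
  rolledBack: string[]; // steps whose cleanup hook ran, in reverse order
}

// Check the token before every step; on cancel, unwind what already ran.
function runSteps(steps: Step[], token: CancellationToken): RunResult {
  const executed: Step[] = [];

  for (const step of steps) {
    if (token.isCancelled()) {
      const rolledBack: string[] = [];
      // Undo in reverse order so later work is cleaned up before earlier work.
      for (let i = executed.length - 1; i >= 0; i--) {
        const undo = executed[i].undo;
        if (undo) {
          undo();
          rolledBack.push(executed[i].name);
        }
      }
      return { state: 'cancelled', executed: executed.map(s => s.name), rolledBack };
    }
    step.run();
    executed.push(step);
  }

  return { state: 'completed', executed: executed.map(s => s.name), rolledBack: [] };
}
```

The key property is that a side-effecting step such as charging a card never starts once the flag is set, because the check sits between steps rather than only at the end.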

For the pause/resume mechanism — used when a task needs human input mid-execution — we serialize the full agent context (conversation history, tool state, partial results, active file handles) to cold storage. The task moves to paused. When human input arrives, we hydrate the context on a fresh agent instance, preserving the exact execution state.

Edge Cases and Battle-Scarred Lessons

Theory meets reality in the edge cases. Here are the failure modes that nearly took down our production swarm:

- Split-brain during network partition: Coordinator thinks agent timed out; agent thinks it's still working. Mitigation: Versioned leases with fencing tokens — only the holder of the current lease version can commit.

- Checkpoint bloat: Early implementations stored full context every 10 seconds, generating 50MB/minute per agent. Solution: Differential checkpoints and periodic garbage collection.

- Zombie completion: Agent finishes task after timeout/transfer, causing duplicate work. Mitigation: Idempotency keys on all output commits; duplicate results from old agents are rejected.

- Clock skew nightmares: VMs with drifted clocks (>30s difference) caused false stall detection. Solution: Hybrid logical clocks (physical time combined with a Lamport-style counter) for ordering, physical clocks only for approximate staleness.

- The thundering herd: When a stalled task returns to unassigned, 100 agents rush to accept it. Solution: Jittered exponential backoff on the offer phase and agent-side circuit breakers.
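The split-brain and zombie-completion mitigations above share one mechanism: the store that accepts results decides who may commit. The sketch below shows that idea with an in-memory store. The `ResultStore` class, its method names, and the key format are hypothetical; a real implementation would enforce the same checks inside a database transaction.

```typescript
interface Lease {
  taskId: string;
  holder: string;
  token: number; // fencing token: strictly increasing per task
}

type CommitOutcome = 'committed' | 'stale_lease' | 'duplicate';

class ResultStore {
  // Object.create(null) avoids accidental hits on inherited keys.
  private currentToken: Record<string, number> = Object.create(null);
  private committedKeys: Record<string, boolean> = Object.create(null);

  // Each new lease on a task bumps the token, which invalidates older holders.
  grantLease(taskId: string, holder: string): Lease {
    const token = (this.currentToken[taskId] || 0) + 1;
    this.currentToken[taskId] = token;
    return { taskId, holder, token };
  }

  // Accept a result only from the current lease holder, and only once per key.
  commit(lease: Lease, idempotencyKey: string): CommitOutcome {
    if (lease.token !== this.currentToken[lease.taskId]) {
      return 'stale_lease'; // an agent that was timed out and replaced
    }
    if (this.committedKeys[idempotencyKey]) {
      return 'duplicate'; // this output was already recorded
    }
    this.committedKeys[idempotencyKey] = true;
    return 'committed';
  }
}
```

If agent A is declared stalled and agent B receives a new lease for the same task, A's late commit carries the old token and is rejected, while a repeated commit from B with the same idempotency key is recognized as a duplicate.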

lastProgressAt semantic checkpoint requirement. Heartbeats prove the process is alive; checkpoints prove it's not insane.

Comparison: Simple vs. Resilient Task Models

| Capability | Simple Todo/Doing/Done | 7-State Resilient FSM |
|---|---|---|
| Failure Detection | Global timeout only | Heartbeat + Progress tracking |
| Recovery Granularity | Restart from scratch | Checkpoint resume (3.2x faster) |
| Load Handling | Force assignment (drops tasks) | Offer/accept with backpressure |
| Cancellation | DB flag (orphaned processes) | Cooperative termination with cleanup |
| Failure Response | Blind retry | Categorized routing (auth/schema/logic) |

Conclusion: Design for the Death of Agents

Resilience isn't bolted on with a cron job that cleans up stuck tasks. It's designed into every state transition. Every arrow in your state diagram should answer: "What if the agent dies here? What if the network partitions here? What if the task takes 10x longer than expected?"

The 7-state lifecycle feels heavier than a boolean isComplete flag. It requires checkpoint storage, heartbeat infrastructure, and careful lease management. But when you're running 500 agents at 2 AM and a spot instance evaporates mid-task, that "overhead" becomes the difference between a system that heals itself and a pager that wakes you up.

Build your state machine assuming your agents are mortal. Because they are. Design for the crash, and your swarm will survive the night.
